Project Motivation

This project was a group assignment for our Deep Learning course.

Instead of just solving a technical problem, we wanted to explore something creative — could we teach AI to compose music?

Together, we decided to combine LSTM networks (great for sequence prediction) with Grey Wolf Optimization (GWO) to fine-tune the model’s hyperparameters. The goal was to generate music that sounded melodious and structured, not just random notes.

Building the Model

We worked as a team, dividing tasks between data preparation, coding, and testing.

The MAESTRO dataset from Google Magenta gave us a rich source of piano performances, with audio recordings aligned to MIDI files.

Our workflow looked like this:

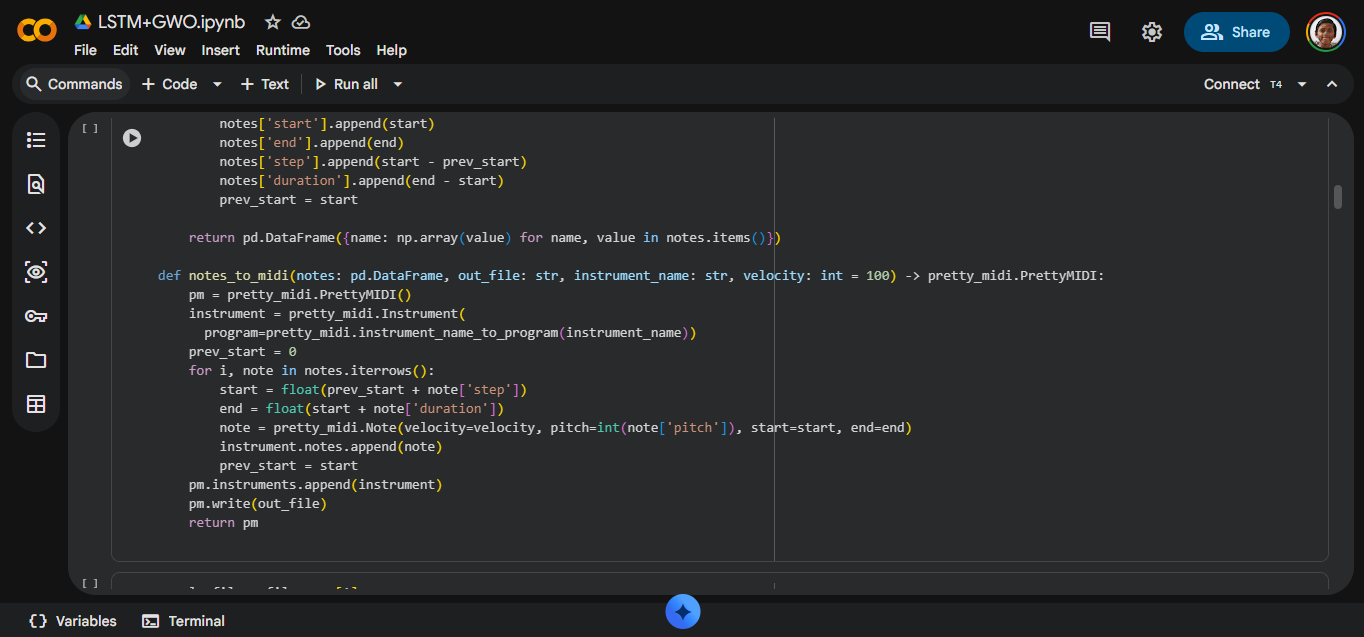

- Data Preparation → Extract notes and chords using the music21 library.

- Model Building → Design an LSTM network to predict the next note or chord.

- Optimization → Apply GWO to tune learning rate, batch size, and LSTM units.

- Training → Feed sequences into the model and minimize loss.

- Music Generation → Use a seed input to generate new sequences, then convert them back into MIDI.
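To make the "feed sequences into the model" step concrete, here is a minimal sketch of how a stream of note and chord tokens can be turned into training pairs. It is not our exact code: the helper names (`build_vocab`, `make_windows`), the window length of 3, and the toy token list are all assumptions for illustration. In the real pipeline the tokens would come from parsing the MIDI files, and the sequence length would be much longer.

```python
def build_vocab(tokens):
    """Map each distinct note/chord token to an integer id (sorted for determinism)."""
    return {tok: i for i, tok in enumerate(sorted(set(tokens)))}


def make_windows(tokens, vocab, seq_len):
    """Slide a window over the token stream.

    Each input is seq_len consecutive token ids; the target is the id
    of the token that immediately follows that window.
    """
    ids = [vocab[t] for t in tokens]
    inputs, targets = [], []
    for start in range(len(ids) - seq_len):
        inputs.append(ids[start:start + seq_len])
        targets.append(ids[start + seq_len])
    return inputs, targets


# Toy token stream (hypothetical): single notes plus one chord written as
# dot-joined pitches. Real tokens would be extracted from MIDI files.
tokens = ["C4", "E4", "G4", "C4.E4.G4", "E4", "G4", "C5"]

vocab = build_vocab(tokens)
X, y = make_windows(tokens, vocab, seq_len=3)

for window, target in zip(X, y):
    print(window, "->", target)
```

Each row of `X` is what the LSTM sees, and the matching entry of `y` is the note or chord it should predict next. Generation reuses the same idea in reverse: start from a seed window, predict one token, append it, and slide the window forward.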

Each of us contributed to different phases, and seeing it all come together was rewarding.

Results

At first, the generated outputs were chaotic — random notes with no rhythm.

But once we applied GWO to tune the hyperparameters, the results improved dramatically:

- Faster convergence during training.

- Lower loss values.

- Generated sequences that sounded closer to human-composed piano music.

Listening to the MIDI files we produced was surreal. It wasn’t perfect, but it was music created by a model we built together.

What We Learned

This project taught us that AI can be creative when guided properly.

- The LSTM captured the sequence patterns.

- GWO helped us fine-tune the model to make those patterns more musical.

- Hyperparameter tuning turned out to be the key difference between noise and melody.
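For readers curious what the GWO tuning loop looks like, here is a minimal sketch of the standard Grey Wolf Optimizer update, assuming a search over three values: log10 of the learning rate, batch size, and LSTM units. The function `toy_loss` is a made-up stand-in with a known minimum; in a real run the objective would train the LSTM with the given hyperparameters and return its validation loss. The bounds, pack size, and iteration count are also assumptions, not the settings we used.

```python
import random


def gwo(objective, bounds, n_wolves=8, n_iters=30, seed=0):
    """Minimize `objective` over box `bounds` with a basic Grey Wolf Optimizer."""
    rng = random.Random(seed)
    dim = len(bounds)
    wolves = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_wolves)]
    best_pos, best_score = None, float("inf")

    for it in range(n_iters):
        # The three best wolves (alpha, beta, delta) lead the pack.
        # Note: a real objective is expensive, so scores should be cached.
        leaders = [list(w) for w in sorted(wolves, key=objective)[:3]]
        score = objective(leaders[0])
        if score < best_score:
            best_pos, best_score = list(leaders[0]), score

        a = 2.0 * (1 - it / n_iters)  # decreases from 2 toward 0: explore, then exploit
        new_wolves = []
        for wolf in wolves:
            new_pos = []
            for d in range(dim):
                estimates = []
                for leader in leaders:
                    A = 2 * a * rng.random() - a
                    C = 2 * rng.random()
                    dist = abs(C * leader[d] - wolf[d])
                    estimates.append(leader[d] - A * dist)
                lo, hi = bounds[d]
                # Average the three leader-guided moves and clip to the bounds.
                new_pos.append(min(max(sum(estimates) / 3, lo), hi))
            new_wolves.append(new_pos)
        wolves = new_wolves

    # Check the final pack as well, since it was never ranked inside the loop.
    final = min(wolves, key=objective)
    if objective(final) < best_score:
        best_pos, best_score = list(final), objective(final)
    return best_pos, best_score


def toy_loss(params):
    """Hypothetical stand-in for validation loss; minimum at (-3, 64, 256)."""
    log_lr, batch_size, units = params
    return (log_lr + 3) ** 2 + ((batch_size - 64) / 64) ** 2 + ((units - 256) / 256) ** 2


# Assumed search ranges: log10(learning rate), batch size, LSTM units.
bounds = [(-5, -1), (16, 256), (64, 512)]
best_pos, best_score = gwo(toy_loss, bounds)
print("best position:", best_pos, "score:", best_score)
```

Positions are continuous here, so batch size and unit count would need rounding to integers before building the model. Because every objective call means a full training run in practice, the pack size and iteration count are the main cost knobs.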

Most importantly, working as a group showed us how collaboration makes complex projects achievable. Each of us brought different strengths, and together we built something that felt alive.

Conclusion

Automatic music generation is still evolving, but this project gave us a glimpse of what’s possible when deep learning meets creativity.

For us, it wasn’t just an assignment — it was a chance to blend technology, teamwork, and art into one project.